/btw which project am I?

“What have you got to lose if you do your best? It doesn't matter what the result is. If you go for it and your head is in the right place, there's nothing to worry about.” -- Tadej Pogačar, 2019

How magj.dev Grew Up

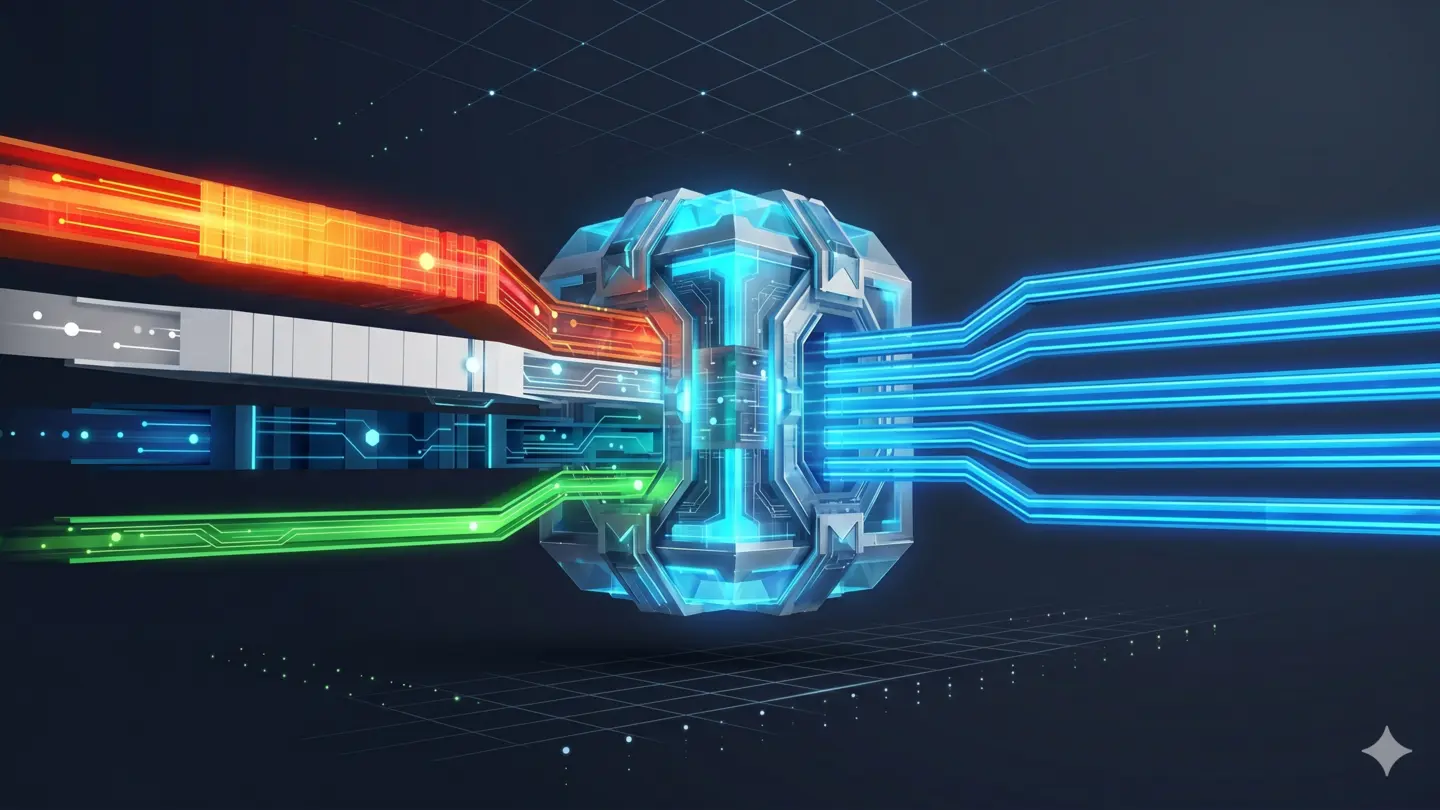

One Gateway to Rule Them All

Why Executable Binaries Deserve More Attention in Information Systems