Blobs: What They Are and Why They Matter

In software, the word blob usually stands for Binary Large Object. At first glance, it sounds like one of those vague terms the industry adopted because it was convenient rather than elegant. In practice, that is almost exactly what happened.

A blob is, essentially, a chunk of data stored and treated as a single object. That data may be an image, a PDF, a video, an audio file, a backup archive, a machine learning model, or any other payload that does not fit neatly into traditional rows and columns. Instead of breaking the content into structured fields, the system stores the whole thing as one opaque unit.

That single idea appears everywhere in modern systems.

Why blobs exist

Relational databases are excellent at structured data. They shine when the data has clear fields, relationships, constraints, and query patterns. A customer has a name, an email address, a status, and a creation date. An order has items, totals, and references to other entities. This is the world of schemas, joins, indexes, and predictable access patterns.

But not everything behaves that way.

You cannot meaningfully query a JPEG image with SQL predicates the way you query a customer record. A video file does not become more useful when it is split into dozens of relational columns. A zip archive is useful precisely because it stays intact. For data like that, systems needed a way to say: store this thing as-is, keep it safe, and let me retrieve it later.

That is where blobs became important.

A simple mental model

A good way to think about blobs is this:

- Structured data answers questions like “Which customer placed the most orders this month?”

- Blob data answers questions like “Give me the PDF the customer uploaded.”

One is optimized for interpretation and querying. The other is optimized for storage and retrieval of raw content.

That distinction matters because many real-world applications need both at the same time.

A document management system may store metadata such as title, owner, upload date, and tags in a database, while the actual file contents live as blobs in an object store. An e-commerce platform may keep product records in a relational database but store product images in blob storage. A machine learning platform may track experiment metadata in tables while saving model artifacts, checkpoints, and datasets as blobs.

Blobs in databases

Historically, the term “blob” was commonly associated with databases. Many relational database engines introduced BLOB columns to hold large binary values directly inside a table.

This works, and sometimes it is the right choice. It keeps the binary content close to the record it belongs to. Transactions can be simpler. Backups capture everything in one place.
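To make the in-database approach concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `attachments` table and its columns are hypothetical; other engines use their own binary column types (PostgreSQL, for example, uses `bytea`), but the idea is the same: the bytes live inside the row.

```python
import sqlite3

# In-memory database for illustration; the schema is hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE attachments (
        id INTEGER PRIMARY KEY,
        filename TEXT NOT NULL,
        content BLOB NOT NULL  -- the raw bytes are stored inside the row
    )
    """
)

payload = b"%PDF-1.7 placeholder bytes"  # stand-in payload, not a real PDF

# sqlite3 binds Python bytes objects as SQLite BLOB values.
with conn:  # commits the transaction on success
    cur = conn.execute(
        "INSERT INTO attachments (filename, content) VALUES (?, ?)",
        ("invoice.pdf", payload),
    )
attachment_id = cur.lastrowid

# Reading the column back yields a bytes object identical to what was stored.
(stored,) = conn.execute(
    "SELECT content FROM attachments WHERE id = ?", (attachment_id,)
).fetchone()
print(len(stored), stored == payload)
```

Every backup and replica of this database now carries the payload as well, which is where the trade-offs start to show.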

But the approach also has trade-offs.

Large binary payloads can make the database heavier, backups slower, replication more expensive, and query performance less predictable. Databases are usually not the cheapest or most scalable place to store huge volumes of media, archives, or large documents. As systems grow, teams often decide to keep only metadata in the database and move the file contents to external object storage.

That architectural shift is extremely common.

Blob storage in the cloud

In modern cloud architectures, “blobs” are often associated less with database columns and more with object storage.

Services like Azure Blob Storage, Amazon S3, and Google Cloud Storage are designed to store massive numbers of objects efficiently, durably, and relatively cheaply. They are ideal for unstructured content: images, logs, backups, exports, documents, datasets, static website assets, and more.

This is one of the reasons the term blob remains so relevant. It evolved from a database concept into a broader architectural pattern.

In cloud systems, a blob typically has:

- a unique identifier or path

- binary content

- metadata

- access rules

- storage tier or lifecycle settings
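The five properties listed above can be modeled as a small record type. This is a sketch only: the `StoredBlob` class, its field names, and the tier values are illustrative assumptions, not any provider's actual object model, and access rules are reduced to a simple set of allowed readers.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class StoredBlob:
    key: str                                  # unique identifier or path
    content: bytes                            # opaque binary payload
    metadata: dict[str, str] = field(default_factory=dict)
    readers: frozenset[str] = frozenset()     # simplified access rule
    tier: str = "hot"                         # illustrative tier name

    @property
    def size(self) -> int:
        return len(self.content)

    def can_read(self, principal: str) -> bool:
        """Allow reads only for principals explicitly listed as readers."""
        return principal in self.readers


blob = StoredBlob(
    key="uploads/customer-42/contract.pdf",
    content=b"example bytes",
    metadata={"content-type": "application/pdf", "owner": "customer-42"},
    readers=frozenset({"customer-42", "legal-team"}),
)
print(blob.size, blob.can_read("legal-team"), blob.can_read("anonymous"))
```

Nothing in the record interprets `content`; everything the storage layer knows about the object lives in the other fields.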

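This model is deceptively powerful. Once you can store arbitrary content as addressable objects with their own metadata and access rules, you cover much of what production applications need, from user uploads and exports to backups and static assets.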

Common use cases

Blobs are everywhere, even when teams do not explicitly call them that.

A few examples:

User-uploaded content

Profile pictures, attachments, PDFs, resumes, invoices, and media files are all natural blob candidates.

Static assets

Modern web applications often serve images, fonts, downloadable bundles, and generated reports from object storage.

Backups and archives

Database dumps, compressed exports, and disaster recovery artifacts are a perfect fit for blob storage.

Data engineering and analytics

Raw ingestion files, Parquet datasets, CSV exports, and staging artifacts are frequently stored as blobs before further processing.

AI and machine learning

Training datasets, vector indexes, checkpoints, prompt archives, embedding snapshots, and serialized models often live in object storage.

Logging and observability

Some systems persist large log bundles, traces, or diagnostic packages as blobs for later analysis.

Why blob storage became foundational

Blob-oriented storage solved several practical problems at once.

First, it separated large unstructured content from transactional application data. That gave architects more freedom to scale each part independently.

Second, it improved storage economics. Object storage is generally much cheaper than a primary relational database used as a giant file repository.

Third, it improved durability and distribution. Modern object stores are designed for high durability, replication, lifecycle policies, and integration with content delivery networks.

Fourth, it aligned well with APIs. Applications can upload a blob, store its reference in a database, and later stream it to clients, process it asynchronously, or move it across environments.

That model is simple, scalable, and easy to reason about.

The architecture pattern that usually works best

A very common design looks like this:

- Store the file itself in blob or object storage.

- Store metadata about that file in a database.

- Keep only a reference to the blob in the relational record.

- Apply lifecycle, retention, security, and processing policies at the storage layer.
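A minimal sketch of this pattern follows. A plain dictionary stands in for the object store (in practice this would be a call to an S3, Azure Blob Storage, or Google Cloud Storage SDK), and `sqlite3` stands in for the application database. The table, the key layout, and the function names are hypothetical.

```python
from __future__ import annotations

import sqlite3
import uuid

# Stand-in for an object store: key -> bytes. A real system would call
# its provider's SDK here instead of writing to a dict.
object_store: dict[str, bytes] = {}

db = sqlite3.connect(":memory:")
db.execute(
    """
    CREATE TABLE documents (
        id INTEGER PRIMARY KEY,
        title TEXT NOT NULL,
        owner TEXT NOT NULL,
        size_bytes INTEGER NOT NULL,
        blob_key TEXT NOT NULL UNIQUE  -- a reference, not the bytes
    )
    """
)


def save_document(title: str, owner: str, content: bytes) -> int:
    """Write the bytes to the object store, then record metadata and the key."""
    blob_key = f"documents/{owner}/{uuid.uuid4()}"
    object_store[blob_key] = content
    with db:  # commit the metadata row
        cur = db.execute(
            "INSERT INTO documents (title, owner, size_bytes, blob_key) "
            "VALUES (?, ?, ?, ?)",
            (title, owner, len(content), blob_key),
        )
    return cur.lastrowid


def load_document(doc_id: int) -> bytes:
    """Look up the reference in the database, then fetch the bytes."""
    (blob_key,) = db.execute(
        "SELECT blob_key FROM documents WHERE id = ?", (doc_id,)
    ).fetchone()
    return object_store[blob_key]


def titles_by_owner(owner: str) -> list[str]:
    """Search and filtering use the metadata table only."""
    rows = db.execute(
        "SELECT title FROM documents WHERE owner = ? ORDER BY title", (owner,)
    )
    return [title for (title,) in rows]


doc_id = save_document("Q3 report", "alice", b"report bytes")
print(load_document(doc_id), titles_by_owner("alice"))
```

Writing the blob before the metadata row means a crash between the two steps leaves an unreferenced object rather than a reference to nothing, a choice that matters once you plan cleanup.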

This gives you the best of both worlds.

The database remains good at search, filtering, relationships, and business logic. The storage layer remains good at holding large binary payloads. Each component does what it is best at.

That separation is one of those architectural decisions that seems minor early on and becomes very important later.

Blobs are “opaque,” and that matters

One subtle but important characteristic of blobs is that, by default, systems treat them as opaque. That means the storage layer usually does not understand the semantic meaning of the content. It knows it is storing bytes. It may know metadata, content type, size, and timestamps. But it does not inherently know that the blob is a contract, a face in a photo, or a model checkpoint.

This is both a limitation and a strength.

It is a limitation because you need additional services to index, search, transform, or extract meaning from the content.

It is a strength because the storage system stays generic, scalable, and broadly useful.

In modern AI systems, this becomes even more interesting. A blob may start as “just bytes,” but downstream pipelines can extract text, generate embeddings, classify content, detect entities, or enrich metadata. In that sense, blobs often represent the raw material from which higher-value knowledge is built.

The storage layer may treat the blob as opaque bytes, but metadata is what increasingly gives those bytes identity, context, and operational meaning.

The evolution of metadata around blobs

There is another reason blobs became so important: over time, the industry learned that raw binary content alone is rarely enough.

In the early days, a file often carried very little context with it. Much of its meaning was inferred from simple conventions such as the file name and especially the file extension. If something ended in .jpg, it was probably an image. If it ended in .pdf, it was a document. If it ended in .zip, it was an archive. That was often sufficient for humans and for many operating systems.

But that model was limited.

A file extension is only a hint. It tells you very little about the object beyond a rough guess of its format. It does not tell you who uploaded it, when it was created, whether it is encrypted, what business entity it belongs to, whether it contains sensitive information, how long it should be retained, which version it is, or whether it has already been processed by downstream systems.
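The gap between a name-based guess and the actual bytes is easy to demonstrate. The sketch below compares Python's `mimetypes.guess_type`, which looks only at the file name, with a check of a few well-known file signatures ("magic numbers") at the start of the content. The signature table is deliberately tiny and illustrative; content sniffing is also a heuristic, just one that renaming a file cannot fool.

```python
from __future__ import annotations

import mimetypes

# A few well-known file signatures; real sniffers check many more.
MAGIC_SIGNATURES = {
    b"\xff\xd8\xff": "image/jpeg",
    b"\x89PNG\r\n\x1a\n": "image/png",
    b"%PDF-": "application/pdf",
    b"PK\x03\x04": "application/zip",
}


def type_from_name(filename: str) -> str | None:
    """Guess the type from the extension alone."""
    guessed, _encoding = mimetypes.guess_type(filename)
    return guessed


def type_from_bytes(content: bytes) -> str | None:
    """Guess the type from the leading bytes of the content."""
    for prefix, mime in MAGIC_SIGNATURES.items():
        if content.startswith(prefix):
            return mime
    return None


# A PDF that was renamed to look like a photo.
name, data = "photo.jpg", b"%PDF-1.7 ..."
print(type_from_name(name))   # derived from the ".jpg" extension
print(type_from_bytes(data))  # derived from the "%PDF-" signature
```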

As software systems became more distributed, cloud-native, automated, and compliance-sensitive, that thin layer of meaning stopped being enough.

So metadata evolved from something incidental into something foundational.

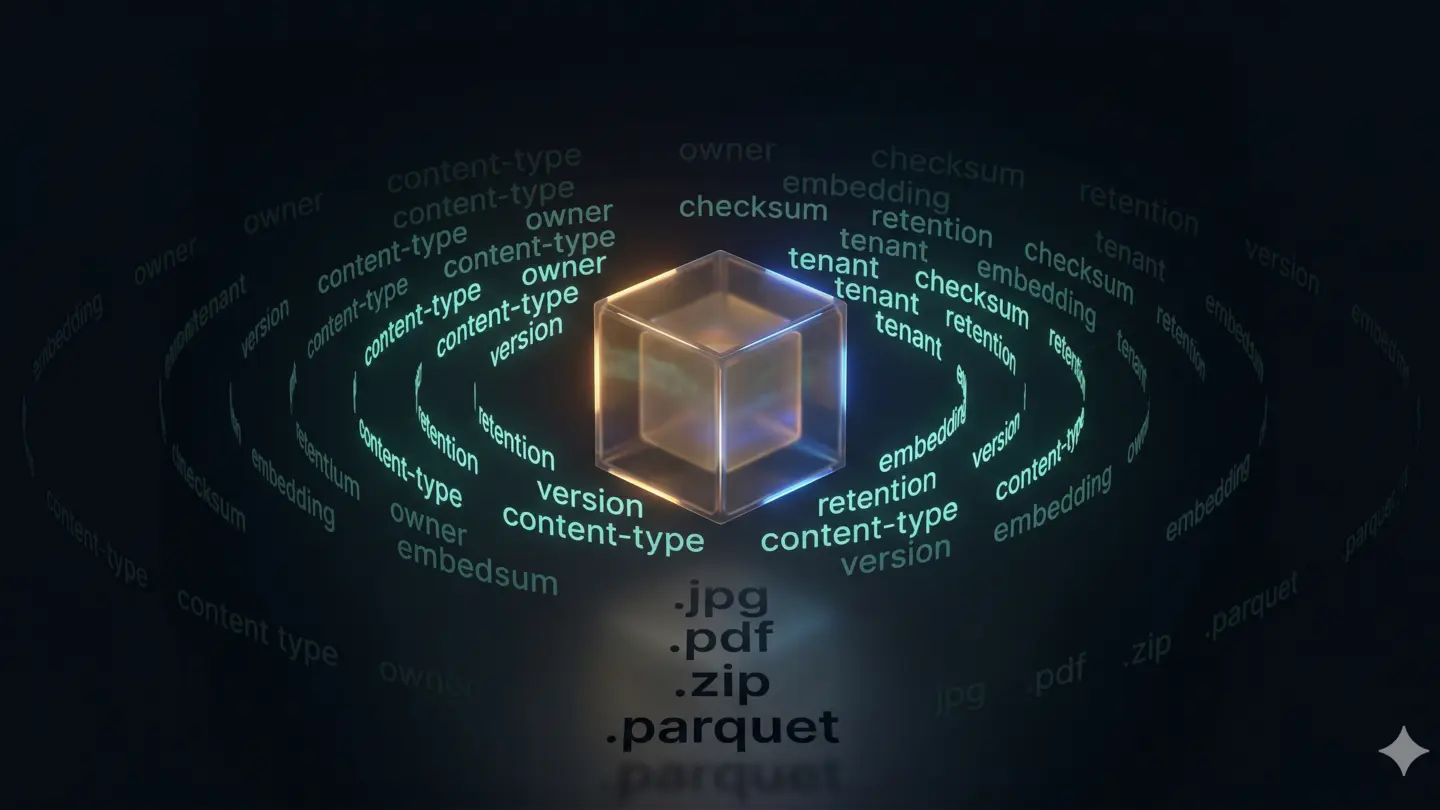

Today, when we store a blob, we often store a rich envelope of metadata around it. That metadata may include content type, size, checksum, owner, creation time, last modification time, version number, tenant, region, retention policy, security classification, access control information, processing status, tags, lineage, and references to related business entities. In AI and data platforms, it may also include extracted text, references to embeddings, document category, confidence scores, language, source system, or enrichment state.
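As a rough illustration, an upload handler might assemble an envelope like the one below before writing the blob. The field names and the `build_metadata` function are hypothetical. Most of these values are cheap to compute at write time (size, checksum, timestamp) or already known to the application (owner, tenant, retention), while fields such as processing status are filled in later by downstream systems.

```python
from __future__ import annotations

import hashlib
import mimetypes
from datetime import datetime, timezone


def build_metadata(
    content: bytes,
    filename: str,
    owner: str,
    tenant: str,
    retention_days: int,
    tags: list[str] | None = None,
) -> dict[str, object]:
    """Collect an illustrative metadata envelope for one blob."""
    content_type, _ = mimetypes.guess_type(filename)
    return {
        # Fall back to the generic binary type when the name gives no hint.
        "content_type": content_type or "application/octet-stream",
        "size_bytes": len(content),
        "sha256": hashlib.sha256(content).hexdigest(),  # integrity check
        "original_filename": filename,
        "owner": owner,
        "tenant": tenant,
        "created_at": datetime.now(timezone.utc).isoformat(),
        "version": 1,
        "retention_days": retention_days,
        "processing_status": "pending",  # updated by downstream pipelines
        "tags": sorted(tags or []),
    }


meta = build_metadata(b"hello", "notes.txt", "alice", "acme", 365, ["q3", "finance"])
print(meta["content_type"], meta["size_bytes"], meta["sha256"])
```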

This changed the role of blobs dramatically.

A blob used to be a passive binary object. Now it is often part of a larger information system where metadata gives the blob operational, business, and even semantic meaning. The binary content is still the core payload, but the metadata is what makes that payload governable, searchable, traceable, and useful at scale.

That distinction is critical.

In many modern architectures, the most valuable part of blob management is not just storing the bytes safely. It is maintaining accurate metadata so the system knows what the object is, where it came from, who can access it, what should happen to it, and how it fits into a broader workflow.

This is why metadata matters so much today.

Without metadata, blob storage becomes a pile of files.

With metadata, blob storage becomes an asset catalog, a document platform, a media pipeline, a compliance system, a knowledge base, or a machine learning artifact repository.

In other words, the blob holds the content, but metadata increasingly holds the context.

And in modern systems, context is often what turns stored data into usable information.

The challenges with blobs

Blobs are useful, but they are not effortless.

Object storage at scale introduces design concerns such as:

- naming conventions

- versioning

- access control

- encryption

- retention policies

- lifecycle management

- replication

- consistency expectations

- upload and download performance

- cleanup of orphaned objects

One common mistake is to focus only on “where to put the file” and forget governance. Over time, poorly managed blob storage can become a graveyard of forgotten artifacts, duplicate uploads, inconsistent naming, and untracked costs.
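Lifecycle rules are one of the main defenses against that kind of sprawl. Managed services express them declaratively (for example, Amazon S3 lifecycle configurations or Azure Blob Storage lifecycle management policies); the sketch below only mimics the idea in plain Python. `LifecycleRule`, its fields, and the action names are hypothetical, and for simplicity the evaluation ignores the object's current tier.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class LifecycleRule:
    prefix: str             # keys this rule applies to, e.g. "exports/"
    cool_after_days: int    # move to a cheaper tier after this age
    delete_after_days: int  # delete once the retention period has passed


def lifecycle_action(
    key: str, created_at: datetime, now: datetime, rules: list[LifecycleRule]
) -> str:
    """Return "keep", "move_to_cool", or "delete" for one object."""
    age = now - created_at
    for rule in rules:  # first matching prefix wins
        if key.startswith(rule.prefix):
            if age >= timedelta(days=rule.delete_after_days):
                return "delete"
            if age >= timedelta(days=rule.cool_after_days):
                return "move_to_cool"
            return "keep"
    return "keep"  # no rule matched: leave the object alone


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
rules = [LifecycleRule(prefix="exports/", cool_after_days=30, delete_after_days=365)]
for age_days in (10, 40, 400):
    created = now - timedelta(days=age_days)
    print(age_days, lifecycle_action("exports/report.csv", created, now, rules))
```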

Another common issue is transactional consistency. Suppose an application uploads a blob successfully but fails before saving its metadata record. Now the system has an orphaned file. The inverse can happen too: metadata exists, but the blob was never written correctly. These edge cases matter in production systems.

Good blob architecture includes cleanup strategies, idempotency, and clear ownership rules.
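Two of those ideas can be sketched briefly. A content-addressed key (derived from a hash of the bytes) makes uploads idempotent, because retrying the same upload writes to the same key. A reconciliation job compares the keys found in storage with the keys referenced by metadata and reports both kinds of inconsistency. The function names are hypothetical; in practice the inputs would come from listing the bucket or container and querying the metadata table. The grace period is an assumption meant to avoid flagging blobs whose metadata write is still in flight.

```python
from __future__ import annotations

import hashlib
from datetime import datetime, timedelta, timezone


def content_key(content: bytes) -> str:
    """Derive the key from the bytes, so retrying an upload is idempotent."""
    return "blobs/sha256/" + hashlib.sha256(content).hexdigest()


def find_orphans(
    stored: dict[str, datetime],  # key -> upload time, from listing storage
    referenced: set[str],         # keys referenced by metadata rows
    now: datetime,
    grace: timedelta = timedelta(hours=24),
) -> list[str]:
    """Blobs that no metadata row points to and that are older than the grace period."""
    return sorted(
        key
        for key, uploaded_at in stored.items()
        if key not in referenced and now - uploaded_at > grace
    )


def find_dangling(stored: dict[str, datetime], referenced: set[str]) -> list[str]:
    """Metadata references whose blob was never written (or was lost)."""
    return sorted(referenced - set(stored))


now = datetime(2024, 1, 10, tzinfo=timezone.utc)
stored = {
    "blobs/a": now - timedelta(days=3),   # unreferenced and old: orphan
    "blobs/b": now - timedelta(hours=1),  # unreferenced but recent: may be in flight
    "blobs/c": now - timedelta(days=5),   # referenced: healthy
}
referenced = {"blobs/c", "blobs/d"}       # "blobs/d" has no stored object
print(find_orphans(stored, referenced, now), find_dangling(stored, referenced))
```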

Blobs and performance

Blob storage is great for durability and scale, but it is not always the best choice for every low-latency access pattern. Architects still need to think carefully about caching, CDN integration, streaming, chunked uploads, and regional placement.

A small image requested globally by users should probably sit behind a CDN. A massive archive may need multipart upload support. A long-running video stream may need a delivery strategy different from a simple file download. A data pipeline may favor append-friendly formats in a data lake rather than arbitrary raw blobs everywhere.
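Multipart (chunked) upload is easier to reason about with a toy model: split the payload into numbered parts, checksum each part so a failed or corrupted part can be retried on its own, and reassemble in part-number order. This is a local sketch of the idea, not any provider's protocol; real services define their own part-size limits, checksum mechanisms, and completion calls, and `split_into_parts` and `assemble` are hypothetical names.

```python
from __future__ import annotations

import hashlib

Part = tuple[int, bytes, str]  # (part number, chunk, sha256 hex digest)


def split_into_parts(content: bytes, part_size: int) -> list[Part]:
    """Split a payload into numbered parts, each with its own checksum."""
    if part_size <= 0:
        raise ValueError("part_size must be positive")
    parts: list[Part] = []
    for number, offset in enumerate(range(0, len(content), part_size), start=1):
        chunk = content[offset:offset + part_size]
        parts.append((number, chunk, hashlib.sha256(chunk).hexdigest()))
    return parts


def assemble(parts: list[Part]) -> bytes:
    """Verify every part, then join them in part-number order."""
    ordered = sorted(parts, key=lambda part: part[0])
    for number, chunk, checksum in ordered:
        if hashlib.sha256(chunk).hexdigest() != checksum:
            raise ValueError(f"part {number} failed its checksum; re-upload it")
    return b"".join(chunk for _, chunk, _ in ordered)


payload = bytes(range(256)) * 10         # 2,560 bytes of sample data
parts = split_into_parts(payload, 1000)  # parts of 1000, 1000, and 560 bytes
# Parts may arrive out of order, for example when uploaded in parallel.
print(len(parts), assemble(list(reversed(parts))) == payload)
```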

The word “blob” sounds simple, but production usage is a serious systems design topic.

Blobs in everyday developer language

What is interesting about “blob” is that developers often use the term with slightly different meanings depending on context.

In a database discussion, it may mean a BLOB column type.

In cloud architecture, it usually means an object stored in an object storage service.

In programming APIs, a Blob object may refer to an immutable, file-like chunk of raw binary data, as with the Blob API in browsers and JavaScript runtimes such as Node.js.

In version control systems such as Git, a blob is the object that stores a file's contents; the file's name and mode are recorded separately, in tree objects.

These are related ideas, not identical ones. The shared concept is still “a unit of raw content stored as one object.”
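The Git meaning is easy to check by hand. In a repository using Git's default SHA-1 object format, a blob's ID is the SHA-1 hash of a short header (`blob`, a space, the content length in bytes, and a NUL byte) followed by the raw content. The file name plays no part, which is why identical files share a single blob. `git_blob_id` is a hypothetical helper name, and repositories using the newer SHA-256 object format compute IDs differently.

```python
import hashlib


def git_blob_id(content: bytes) -> str:
    """Compute the object ID Git assigns to these bytes (SHA-1 object format)."""
    header = b"blob " + str(len(content)).encode("ascii") + b"\0"
    return hashlib.sha1(header + content).hexdigest()


print(git_blob_id(b"hello world\n"))
```

The result should match `git hash-object` run on a file with the same contents; every empty file, for example, maps to `e69de29bb2d1d6434b8b29ae775ad8c2e48c5391`.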

Why developers should care

Blobs matter because almost every modern application eventually handles unstructured data.

Even simple systems grow into this. A business app begins with forms and tables, then someone wants attachments. Then exports. Then images. Then generated reports. Then backups. Then analytics files. Then AI artifacts.

Before long, blob handling is not a side concern anymore. It becomes part of the platform.

Understanding blobs helps developers make better decisions about storage architecture, system boundaries, cost, performance, and operational hygiene. It also helps avoid a common anti-pattern: forcing every kind of data into the database just because the database is already there.

Final thought

Blobs are one of those deeply practical concepts in computing. They are not glamorous. They do not sound academic. They rarely get spotlighted in architecture diagrams. But they quietly support a huge portion of the modern software world.

Whenever a system needs to store something large, binary, unstructured, or simply better kept intact, blobs enter the conversation.

And once you notice them, you start seeing them everywhere.