The complete history of HTTP, from a NeXT cube to QUIC

HTTP is arguably the most important protocol ever designed. It powers every website you visit, every API call your apps make, and every cat video you stream. But what started as a one-line text protocol on a NeXT computer at a physics lab in Geneva has evolved through five major versions over 35 years — each one reshaping how the internet works. Let’s trace that entire journey, from Tim Berners-Lee’s “vague but exciting” memo to the QUIC-powered future we’re living in right now.

It all started with a memo nobody understood

On March 12, 1989, a British physicist named Tim Berners-Lee submitted a document to his manager at CERN titled “Information Management: A Proposal.” His manager, Mike Sendall, scribbled on the cover page what might be the most consequential margin note in computing history: “Vague but exciting.”

The problem Berners-Lee wanted to solve was deceptively mundane. CERN employed thousands of people, but the turnover was brutal — two years was a typical stay. Knowledge evaporated constantly. Technical details of past projects were lost forever, or recovered only after frantic detective work during emergencies. As Berners-Lee wrote: “The information has been recorded, it just cannot be found.” CERN’s real organizational structure was a tangled web of interconnections that no hierarchical file system or keyword search could model.

Berners-Lee’s breakthrough was elegantly simple: marry hypertext to the Internet. Hypertext — the idea that documents could contain clickable links to other documents — had been theorized since Vannevar Bush’s 1945 essay and explored by Ted Nelson and Douglas Engelbart. The Internet already existed as an academic network. But nobody had connected the two. Berners-Lee had pitched this combination to people in both the hypertext and networking communities, and nobody bit. So he built it himself.

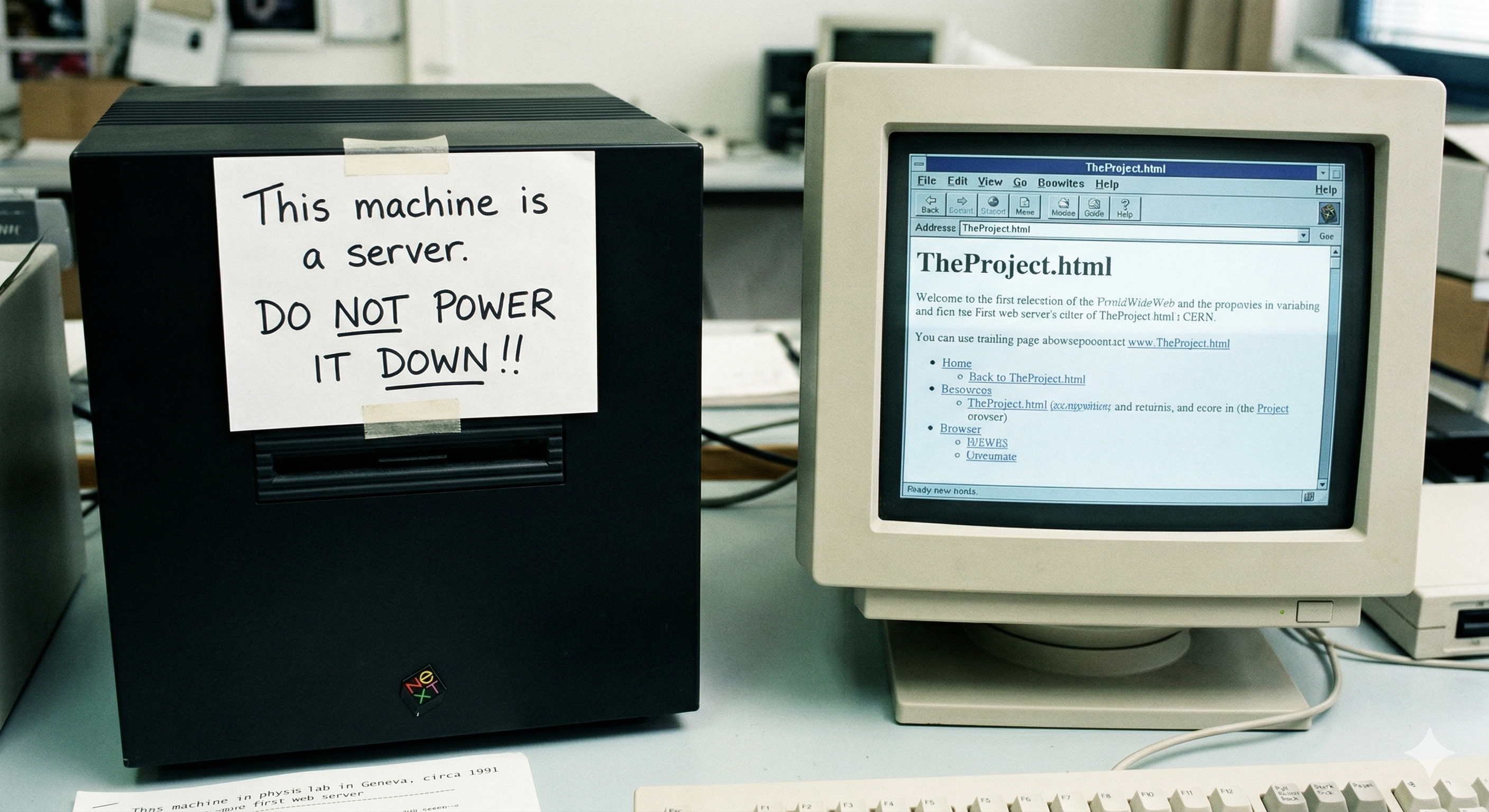

By Christmas 1990, working on a NeXT workstation, he had all four pieces running: the first web browser (called WorldWideWeb, later renamed Nexus), the first web server (CERN httpd), HTML, and HTTP. He stuck a label on the NeXT machine reading “This machine is a server. DO NOT POWER IT DOWN!!” — and the World Wide Web was born.

He wasn’t alone. Robert Cailliau, a Belgian engineer at CERN, had independently proposed a hypertext project and became Berners-Lee’s key partner in promoting the system internally. Nicola Pellow, a student at CERN, built the first cross-platform Line Mode Browser in early 1991. On August 6, 1991, Berners-Lee posted about the WWW on the alt.hypertext Usenet newsgroup — the web’s official public launch. And on April 30, 1993, CERN made the decision that changed everything: they released the World Wide Web software royalty-free. Berners-Lee had rejected suggestions to patent the technology. The web would belong to everyone.

HTTP/0.9 was a one-line protocol, and that was the point

The original HTTP — later retroactively called HTTP/0.9 since it had no version number — was absurdly simple. The entire protocol worked like this: open a TCP connection, send a single line like GET /path/to/page, receive a raw HTML document, and the connection closes. That’s it. No headers. No status codes. No content types. No POST method. Only GET. Only HTML. If something went wrong, the server sent back an HTML page describing the error.

You could literally test it with telnet:

$ telnet info.cern.ch 80

GET /hypertext/WWW/TheProject.html

<HTML>...</HTML>

(connection closed)This simplicity was intentional and strategic. As Ilya Grigorik notes in High Performance Browser Networking: “The original HTTP proposal by Tim Berners-Lee was designed with simplicity in mind as to help with the adoption of his other nascent idea: the World Wide Web.” The protocol was so trivially simple that anyone could implement a client or server in hours. The barrier to entry was essentially zero. By late 1993, there were over 500 known web servers running worldwide. The strategy worked.

But the web was growing fast. NCSA Mosaic launched in 1993, Netscape Navigator in December 1994. The W3C was founded. ISPs like CompuServe and AOL started offering dial-up access. People wanted to send images, forms, and files — not just HTML pages. HTTP/0.9 wasn’t going to cut it.

HTTP/1.0 gave the web a proper vocabulary

RFC 1945, published in May 1996 by Tim Berners-Lee, Roy Fielding, and Henrik Frystyk Nielsen, formalized HTTP/1.0. Technically it was an “Informational” RFC — descriptive rather than prescriptive — documenting the common patterns that had already emerged across dozens of incompatible implementations.

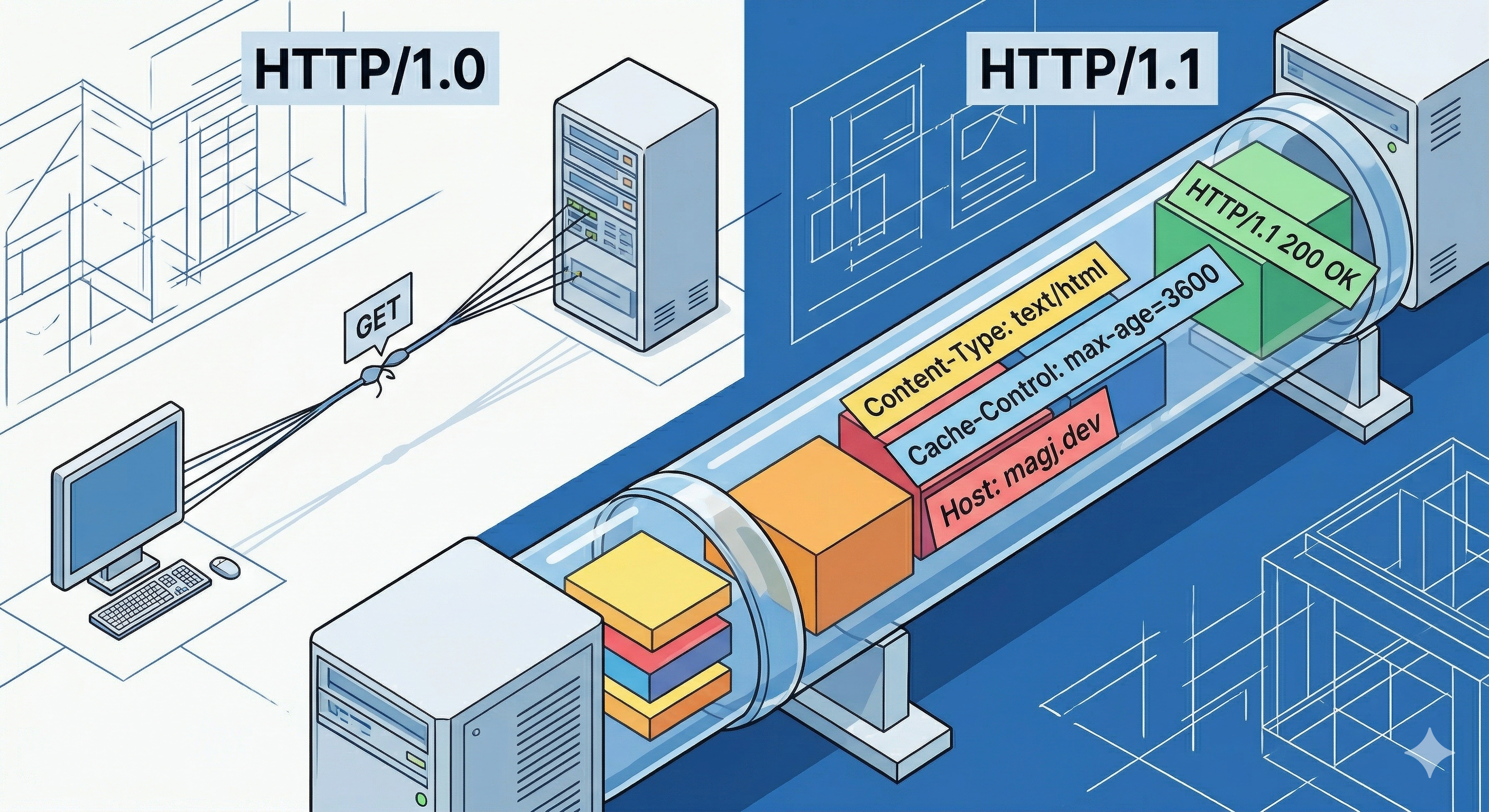

The changes were foundational. Requests now included a version number (GET /page HTTP/1.0). Responses included status codes (HTTP/1.0 200 OK). Both requests and responses carried headers — metadata like Content-Type, Content-Length, and Date. POST and HEAD methods were added alongside GET. Suddenly HTTP could carry any media type, not just HTML. Despite the “Hypertext” in its name, HTTP quietly became a general-purpose hypermedia transport.

The key limitation of HTTP/1.0 was brutal: every single request required a new TCP connection. Want an HTML page with 20 images? That’s 21 TCP handshakes. Each one incurs the overhead of TCP’s three-way handshake and slow-start algorithm. As web pages grew richer, this became an increasingly serious performance problem.

Let’s talk about the people behind these early RFCs, because they shaped the web’s architecture in ways we still feel today. Roy Fielding, born in 1965 in Laguna Beach, California, was the primary driving force behind HTTP’s evolution. He co-founded the Apache HTTP Server project and co-authored RFC 1945. His PhD dissertation at UC Irvine in 2000, “Architectural Styles and the Design of Network-based Software Architectures,” defined REST (Representational State Transfer) — which wasn’t about building APIs on top of HTTP but about articulating the architectural principles that guided HTTP itself. He used REST constraints to make specific design decisions, like rejecting a proposal for batched MGET/MHEAD methods in favor of persistent connections.

Henrik Frystyk Nielsen, born in 1969 in Copenhagen, was Berners-Lee’s first graduate student at CERN. He shared an office with Håkon Wium Lie (the co-creator of CSS), worked on the CERN httpd server and the libwww library, co-authored RFC 1945, and became the W3C’s HTTP Activity Lead. He managed the development of HTTP/1.1.

HTTP/1.1 was “good enough” for 18 years

RFC 2068 arrived in January 1997, authored by Fielding, Jim Gettys, Jeffrey Mogul, Frystyk, and Berners-Lee. It was revised in June 1999 as RFC 2616 (adding Larry Masinter and Paul Leach as authors), and finally reorganized into six focused documents — RFC 7230 through 7235 — in June 2014. That last revision wasn’t a new protocol version; it split the monolithic spec into manageable pieces and separated HTTP semantics from wire format, which proved strategically important because it let HTTP/2 define a new transport while reusing the same semantic definitions.

HTTP/1.1 introduced several features that made it remarkably durable. Persistent connections became the default — connections stayed open for multiple requests instead of closing after each one. Chunked transfer encoding let servers stream responses before knowing the total content length. The mandatory Host header enabled virtual hosting (multiple websites on a single IP address), which was critical as IPv4 addresses grew scarce. Improved cache controls via the Cache-Control header replaced the limited Expires approach. And byte-range requests enabled resumable downloads.

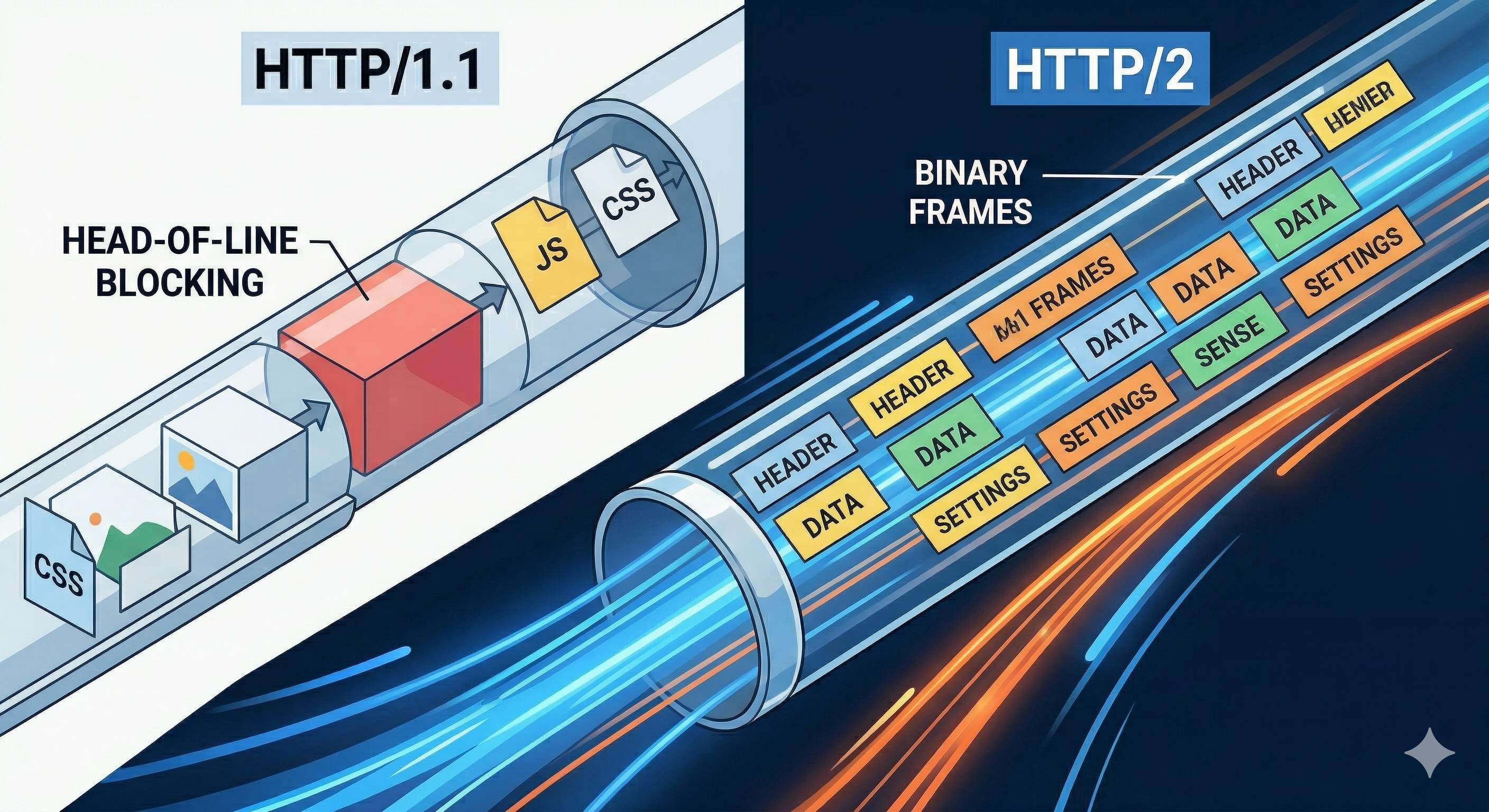

HTTP/1.1 also defined request pipelining — sending multiple requests without waiting for responses. In theory, this would solve the serialization problem. In practice, pipelining was a disaster. Responses still had to come back in order (head-of-line blocking), proxy servers handled it poorly, and most browsers quietly disabled it.

So why did HTTP/1.1 dominate from 1997 to roughly 2015? Because its extensibility made it adaptable without requiring version bumps. New headers and methods could be added freely. The web industry developed an entire toolkit of performance workarounds: domain sharding (spreading assets across multiple domains to get more parallel connections), CSS sprites (combining many small images into one), resource concatenation (bundling JavaScript and CSS files), and resource inlining. Browsers opened up to 6 parallel TCP connections per origin to work around the single-connection bottleneck. These hacks were ugly but effective, and they let HTTP/1.1 scale far beyond what its designers might have expected.

But the workarounds had limits. The core problems remained: head-of-line blocking meant a slow response on one connection stalled everything behind it. Plain-text headers were sent redundantly with every request — cookies, user-agent strings, and other metadata often added 700–800 bytes of overhead per request, completely uncompressed. There was no multiplexing — HTTP/1.1’s text-based format made it impossible to interleave chunks from different resources on a single connection because the receiver couldn’t tell them apart. And the server couldn’t proactively push resources it knew the client would need. Someone needed to rethink the wire format.

SPDY proved that HTTP could be faster

That someone was Mike Belshe. A Cal Poly CS graduate who’d worked at Netscape and was one of the first engineers on Google Chrome, Belshe published a landmark research paper in April 2010 titled “More Bandwidth Doesn’t Matter (much).” His findings were striking: above 5 Mbps, increasing bandwidth barely improved page load times. But reducing latency always helped linearly — every 20ms reduction yielded a 7–15% improvement. The web’s bottleneck wasn’t the pipe’s width; it was how many round trips the protocol required.

On November 12, 2009, Belshe and his colleague Roberto Peon announced SPDY (pronounced “speedy”) on the Chromium blog. Initial tests showed page loads up to 55% faster than HTTP/1.1 in lab conditions. SPDY introduced the ideas that would define the next generation of HTTP: multiplexed streams over a single TCP connection, request prioritization, header compression, server push, and a binary framing layer — all while preserving full backward compatibility with HTTP semantics. Cookies, ETags, content negotiation — everything worked unchanged.

Google deployed SPDY aggressively. By 2011, Belshe reported at the O’Reilly Velocity conference that Google was using SPDY for roughly 99% of SSL connections between Chrome and Google services, with a 15%+ average speed improvement and “no property not performing better with SPDY.” Browsers followed: Chrome, Firefox, Opera, Internet Explorer 11, and eventually Safari all added support.

In February 2012, Belshe submitted SPDY to the IETF as a formal Internet Draft. The IETF’s httpbis working group had already issued a call for proposals for HTTP/2. They considered alternatives from Microsoft and others, but SPDY was chosen as the starting point. The formalization process would take three years.

HTTP/2 brought binary framing and true multiplexing

RFC 7540 was published on May 14, 2015, authored by Mike Belshe (now at BitGo, the cryptocurrency security company he’d co-founded), Roberto Peon (Google), and Martin Thomson (Mozilla) as editor. HTTP/2 was SPDY refined, hardened, and standard

The most fundamental change was the binary framing layer. HTTP/1.1 was a text-based protocol — human-readable but inefficient and ambiguous to parse (there were four different ways to determine message length). HTTP/2 splits all communication into binary frames, each tagged with a type (HEADERS, DATA, SETTINGS, PUSH_PROMISE) and a stream ID. Binary is more compact, faster to parse, and eliminates entire categories of parsing bugs.

Multiplexing was the headline feature. Multiple requests and responses flow as independent, interleaved streams over a single TCP connection. No more domain sharding. No more 6-connection limit workarounds. Google reported that with HTTP/1.1, 74% of connections carried just a single transaction; under HTTP/2, that dropped to 25%.

Roberto Peon’s HPACK header compression (published alongside as RFC 7541, co-authored with Hervé Ruellan) was a critical innovation. SPDY had used DEFLATE compression for headers, but researchers discovered this was vulnerable to CRIME attacks — compression oracle attacks where an attacker could inject data and infer secret header values by measuring compressed sizes. HPACK replaced dynamic stream compression with a fundamentally different design: a static table of 61 common header entries, a per-connection dynamic table updated incrementally, and fixed Huffman codes for literal values. Secure by design.

Server push let servers proactively send resources via PUSH_PROMISE frames — anticipating what clients would need before they asked. Stream prioritization enabled clients to declare the relative importance of different resources using a dependency tree model. Both features sounded great on paper, though in practice server push proved tricky to configure correctly (Chrome eventually deprecated support) and prioritization implementations varied widely across browsers and servers.

Adoption of HTTP/2 faced friction. All major browsers decided to implement HTTP/2 only over TLS (the h2 protocol identifier), making encryption de facto mandatory even though the RFC technically defined a cleartext mode (h2c). This meant sites had to adopt HTTPS first — a barrier that eased significantly when Let’s Encrypt launched in 2015–2016 with free TLS certificates. By late 2015, most major browsers supported HTTP/2. Server support followed: nginx in September 2015, Apache in October 2015, and major CDNs like Akamai and Cloudflare by December 2015. By 2020, roughly 50% of all web requests were served over HTTP/2.

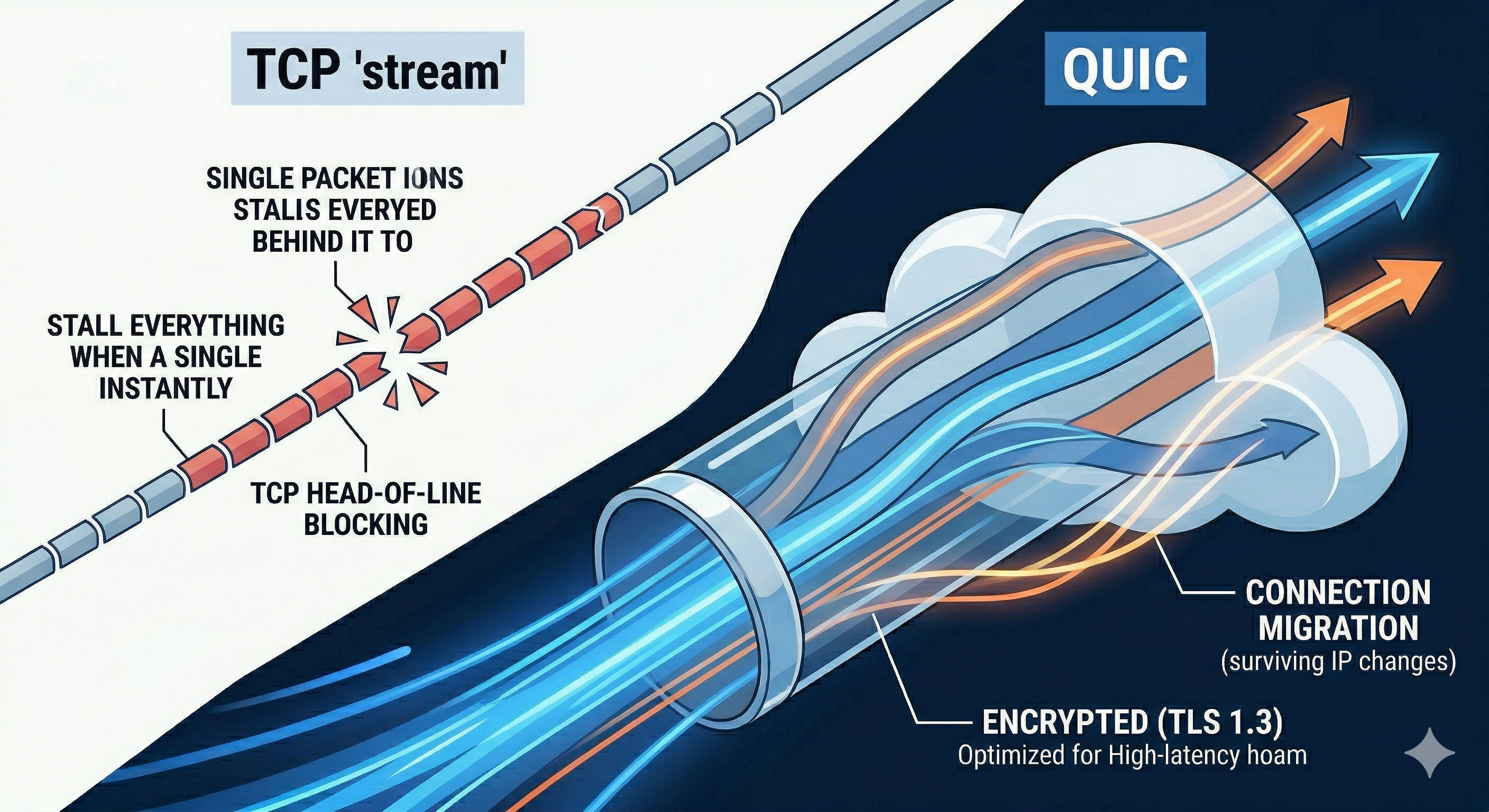

But HTTP/2 had a fundamental problem it couldn’t fix: TCP head-of-line blocking. HTTP/2 solved application-layer HOL blocking through multiplexing, but all those multiplexed streams still flowed through a single TCP connection. TCP guarantees in-order byte delivery — if one packet is lost, every subsequent byte is blocked until retransmission completes, even if those bytes belong to completely unrelated streams. At 2% packet loss, studies showed HTTP/1.1 with its 6 parallel connections could actually outperform HTTP/2, because only the affected connection stalled. The problem wasn’t HTTP anymore. The problem was TCP itself.

HTTP/3 and QUIC replace TCP altogether

The solution was radical: stop using TCP. In 2012, Google engineer Jim Roskind — a former Netscape chief architect with an MIT PhD and over 190 patents — designed a new transport protocol called QUIC (originally “Quick UDP Internet Connections,” though in IETF usage it’s just a name, not an acronym). QUIC runs over UDP and implements its own reliable, encrypted, multiplexed transport from scratch.

Google deployed QUIC internally in 2012, began Chrome experiments in 2013, and by 2017 it handled almost all Chrome-to-Google-services traffic. In their SIGCOMM 2017 paper, the Google team reported real results: 8% reduction in Google Search latency on desktop, 3.6% on mobile, and an 18% reduction in YouTube rebuffer rates on desktop. About 88% of QUIC connections achieved 0-RTT handshakes.

The IETF QUIC Working Group was formally established in October 2016 to standardize the protocol. The IETF version diverged significantly from Google’s original: standard TLS 1.3 replaced Google’s custom cryptographic handshake, the wire format was completely redesigned, and the architecture became modular. According to the QUIC Working Group (quicwg.org), the effort produced QUIC version 1 as “a UDP-based, stream-multiplexing, encrypted transport protocol.” Development spanned almost 5 years, 26 face-to-face meetings, and 1,749 GitHub issues. The core specs were published on May 27, 2021: RFC 9000 (QUIC Transport, co-edited by Jana Iyengar and Martin Thomson), RFC 9001 (TLS Integration), and RFC 9002 (Loss Detection and Congestion Control, co-edited by Jana Iyengar and Ian Swett). HTTP/3 itself — RFC 9114, edited by Mike Bishop of Akamai — followed on June 6, 2022. The name “HTTP/3” was proposed in October 2018 by Mark Nottingham, chair of both the QUIC and HTTP working groups, to “clearly identify it as another binding of HTTP semantics to the wire protocol.”

Here’s what HTTP/3 and QUIC actually change at the technical level. Stream-level independence is the big one: QUIC implements multiplexing at the transport layer, so each stream has its own ordering and loss recovery. A lost packet affects only the stream whose data it carried — every other stream keeps flowing. This eliminates TCP’s head-of-line blocking problem entirely.

Connection establishment is dramatically faster. A new TCP + TLS 1.2 connection takes 3 round trips. TCP + TLS 1.3 takes 2. A new QUIC connection takes just 1 round trip because it combines the transport and cryptographic handshakes into a single step. For returning connections, QUIC supports 0-RTT resumption — the client can send data immediately using cached session parameters, with no round trips at all (though 0-RTT data lacks replay protection, so only safe, idempotent requests should use it).

Encryption is mandatory and pervasive. QUIC integrates TLS 1.3 directly into the transport layer and encrypts nearly everything — including packet headers and connection state that would be visible plaintext in TCP. This improves privacy and resists protocol ossification, the phenomenon where middleboxes (firewalls, NATs, load balancers) develop hardcoded assumptions about protocol behavior that prevent future evolution.

Connection migration uses Connection IDs instead of TCP’s 4-tuple of source/destination IP and port. When your phone switches from Wi-Fi to cellular, the IP address changes — but the QUIC connection survives because it’s identified by a CID, not a network address. This matters enormously for mobile users moving between networks.

And because QUIC runs in userspace over UDP rather than in the OS kernel like TCP, implementations can be updated at application speed without waiting for kernel patches to propagate across billions of devices.

HTTP/3 uses QPACK (RFC 9204) instead of HTTP/2’s HPACK for header compression. QPACK decouples compression state updates from request/response data using dedicated unidirectional streams, preventing a subtle reintroduction of head-of-line blocking that could occur with HPACK’s strictly sequential decoding.

Real-world adoption has been strong. As of March 2026, HTTP/3 is used by 38.6% of all websites according to W3Techs. Major adopters include Google, Facebook, YouTube, Instagram, LinkedIn, Amazon, and ChatGPT. All major browsers have HTTP/3 enabled by default: Chrome since April 2020, Firefox since April 2021, Edge since November 2020, and Safari since Safari 16 in September 2024. A 2025 Catchpoint study measured a 41.8% reduction in median Time To First Byte with HTTP/3, with the largest gains on high-latency and lossy connections — exactly where TCP struggled most.

The QUIC Working Group continues to evolve the protocol. Active work includes Multipath QUIC (using multiple network paths simultaneously), qlog (a standardized logging schema for debugging), and extended acknowledgement mechanisms. The group’s most recent meeting was at IETF 125 in Shenzhen on March 19, 2026.

The people who made it all happen

This 35-year story is ultimately about a handful of engineers with deep convictions about how the internet should work. Tim Berners-Lee — the physicist who built ENQUIRE as a personal hypertext tool during a 1980 CERN contract, left, returned in 1984, and gave the world the web by Christmas 1990. He left CERN in 1994 to found the W3C, was knighted in 2004, and was honored at the 2012 Olympics opening ceremony. Roy Fielding — the California “beach bum” (his own description) who became the primary architect of HTTP/1.1, co-founded Apache, and formalized REST as an architectural style. Henrik Frystyk Nielsen — the Danish grad student who shared an office with the inventor of CSS, co-authored HTTP/1.0, and managed the development of HTTP/1.1 before moving to Microsoft and later Amazon.

Then the next generation. Mike Belshe — the Chrome engineer who proved bandwidth wasn’t the web’s bottleneck, co-created SPDY, pushed it through the IETF, and co-authored the HTTP/2 RFC. Roberto Peon — who co-created SPDY with Belshe and then designed HPACK, solving a critical security vulnerability in header compression. Jim Roskind — the veteran engineer (Netscape, Infoseek) who designed QUIC at Google in 2012, inducted into the National Cyber Security Hall of Fame in 2024. Jana Iyengar — who co-edited the core QUIC transport spec (RFC 9000), worked on transport protocols since 2000, moved from Google to Fastly, and has been working on QUIC since 2013. Ian Swett — Google’s QUIC Tech Lead since 2012, co-editor of the loss detection and congestion control RFC, and lead on BBRv2 congestion control.

Conclusion

HTTP’s evolution follows a clear pattern: each version solved the bottleneck created by its predecessor’s success. HTTP/0.9’s simplicity enabled explosive adoption but couldn’t carry anything beyond HTML. HTTP/1.0 added the vocabulary of headers and status codes but created a TCP connection per request. HTTP/1.1 fixed connection overhead and lasted 18 years on the strength of its extensibility — but its text-based, sequential nature couldn’t keep up with increasingly complex web pages. HTTP/2 introduced binary framing and true multiplexing but remained trapped by TCP’s in-order delivery guarantee. HTTP/3 finally broke free by replacing TCP itself with QUIC.

The most striking insight from this history is that protocol simplicity drives adoption, but real-world scale exposes the next constraint. Berners-Lee’s one-line GET protocol was just enough to prove the web could work. Thirty-five years and five versions later, HTTP/3 eliminates head-of-line blocking at every layer, establishes connections in zero round trips, encrypts everything by default, and survives network switches without dropping a packet. The current trajectory — with Multipath QUIC, WebTransport, and QUIC datagrams on the horizon — suggests we’re entering an era where the transport layer is no longer a constraint on application innovation but a programmable platform for it.

References

Primary Sources (RFCs and Specifications)

- RFC 1945 — Berners-Lee, T., Fielding, R., Frystyk, H. “Hypertext Transfer Protocol — HTTP/1.0.” May 1996. datatracker.ietf.org/doc/html/rfc1945

- RFC 2068 — Fielding, R., Gettys, J., Mogul, J., Frystyk, H., Berners-Lee, T. “Hypertext Transfer Protocol — HTTP/1.1.” January 1997. datatracker.ietf.org/doc/html/rfc2068

- RFC 2616 — Fielding, R., Gettys, J., Mogul, J., Frystyk, H., Masinter, L., Leach, P., Berners-Lee, T. “Hypertext Transfer Protocol — HTTP/1.1.” June 1999. datatracker.ietf.org/doc/html/rfc2616

- RFC 7230–7235 — Fielding, R., Reschke, J. (eds.) “HTTP/1.1” (six-part reorganization). June 2014. datatracker.ietf.org/doc/html/rfc7230

- RFC 7540 — Belshe, M., Peon, R., Thomson, M. (ed.) “Hypertext Transfer Protocol Version 2 (HTTP/2).” May 2015. datatracker.ietf.org/doc/html/rfc7540

- RFC 7541 — Peon, R., Ruellan, H. “HPACK: Header Compression for HTTP/2.” May 2015. datatracker.ietf.org/doc/html/rfc7541

- RFC 9000 — Iyengar, J. (ed.), Thomson, M. (ed.) “QUIC: A UDP-Based Multiplexed and Secure Transport.” May 2021. datatracker.ietf.org/doc/html/rfc9000

- RFC 9001 — Thomson, M. (ed.), Turner, S. (ed.) “Using TLS to Secure QUIC.” May 2021. datatracker.ietf.org/doc/html/rfc9001

- RFC 9002 — Iyengar, J. (ed.), Swett, I. (ed.) “QUIC Loss Detection and Congestion Control.” May 2021. datatracker.ietf.org/doc/html/rfc9002

- RFC 9114 — Bishop, M. (ed.) “HTTP/3.” June 2022. datatracker.ietf.org/doc/html/rfc9114

- RFC 9204 — Krasic, C., Bishop, M., Frindell, A. (eds.) “QPACK: Field Compression for HTTP/3.” June 2022. datatracker.ietf.org/doc/html/rfc9204

Original Proposals and Dissertations

- Berners-Lee, T. “Information Management: A Proposal.” CERN, March 1989. w3.org/History/1989/proposal.html

- Fielding, R.T. “Architectural Styles and the Design of Network-based Software Architectures.” Doctoral dissertation, University of California, Irvine, 2000. ics.uci.edu/~fielding/pubs/dissertation/top.htm

- Belshe, M. “More Bandwidth Doesn’t Matter (much).” April 2010. belshe.com/2010/05/24/more-bandwidth-doesnt-matter-much/

Research Papers

- Langley, A., Riddoch, A., Wilk, A., et al. “The QUIC Transport Protocol: Design and Internet-Scale Deployment.” SIGCOMM 2017, ACM. dl.acm.org/doi/10.1145/3098822.3098842

Documentation and Articles

- CERN — “A Short History of the Web.” home.cern/science/computing/birth-web/short-history-web

- World Wide Web Foundation — “History of the Web.” webfoundation.org/about/vision/history-of-the-web/

- MDN Web Docs — “Evolution of HTTP.” Mozilla. developer.mozilla.org/en-US/docs/Web/HTTP/Guides/Evolution_of_HTTP

- Grigorik, I. “Brief History of HTTP.” High Performance Browser Networking, O’Reilly. hpbn.co/brief-history-of-http/

- Stenberg, D. “RFC 7540 is HTTP/2.” May 2015. daniel.haxx.se/blog/2015/05/15/rfc-7540-is-http2/

- Stenberg, D. HTTP/3 Explained. http3-explained.haxx.se

- HTTP/2 FAQ — IETF httpbis Working Group. http2.github.io/faq/

- QUIC Working Group — IETF. quicwg.org

- http.dev — “HTTP/3.” http.dev/3