The AI Engineering Gap

There is a question I keep coming back to, one that nobody in the room seems to want to ask: If AI projects are so transformative, why do over 80% of them fail to deliver meaningful value?

That failure rate — nearly double that of traditional IT projects — is not a technology problem. The models work. The APIs are available. The cloud infrastructure is mature. What’s failing is everything around the model: the architecture, the integration, the operational discipline, the engineering.

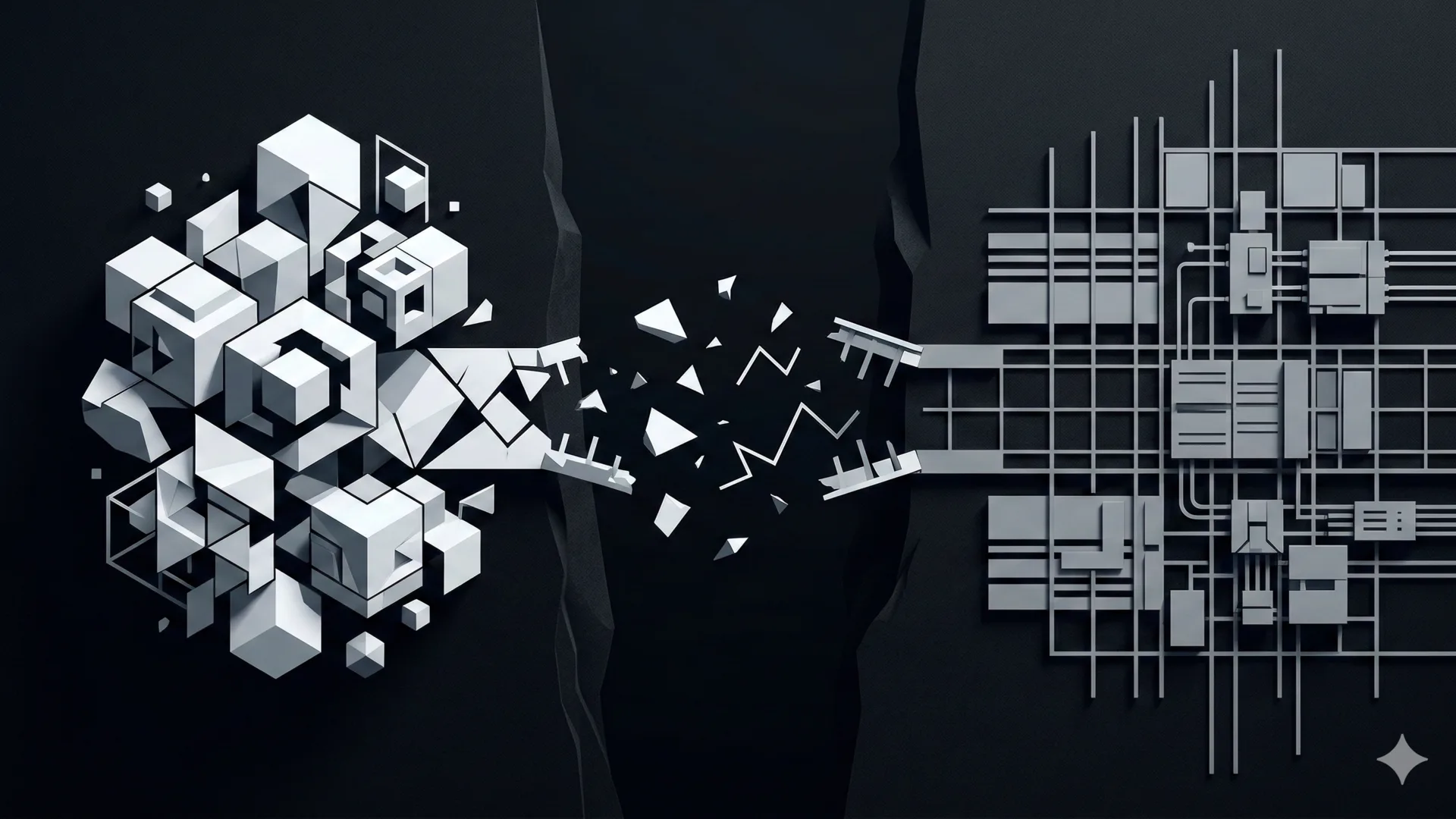

I call this the Engineering Gap: the dangerous disconnect between the rapid adoption of AI capabilities and the fundamental software engineering discipline required to make those capabilities reliable, safe, and production-worthy.

And the most troubling part? Almost nobody is talking about it. Because the interface to AI is natural language — something every human already knows how to use — we have collectively convinced ourselves that the underlying engineering must be just as simple.

It is not. It is, in fact, harder than anything most organizations have ever built.

The Most Dangerous Illusion of the AI Era

Here is the core of the problem: the belief that because the interface is easy, the engineering is simple.

When a product manager opens ChatGPT and gets a coherent answer in seconds, the mental model that forms is: “This is easy. We just need to connect this to our systems.” That mental model then cascades through the organization into roadmaps, budgets, hiring decisions, and timelines. “Integrate AI by Q3 or lose market share.” The C-suite mandate is clear. The understanding of what that mandate actually requires? Almost nonexistent.

What follows is predictable. Teams of smart, enthusiastic people — often from data science, statistics, or prompt engineering backgrounds — begin building what are essentially fragile prompt-wrappers held together by optimism. They work brilliantly in demos. They collapse in production.

This is not a new pattern. In the late 1960s, the software industry hit an eerily similar wall. Hardware had advanced so quickly that software couldn’t keep up. Projects were chronically late, over budget, and unreliable. The field called it the Software Crisis, and it was serious enough that NATO convened a conference in 1968 specifically to address it. That conference put the term “software engineering” on the map. The name was a deliberate provocation, meant to force the industry to accept that building software requires the same rigor as building bridges.

We are in a second Software Crisis now. And we’re making the same mistake: treating an engineering challenge as if it were merely a tooling challenge.

The Reliability Paradox: Stochastic Brain, Deterministic Body

If there is one concept I wish I could install in the mind of every executive, product owner, and AI enthusiast making decisions about production systems, it is this:

The more powerful and unpredictable your AI component is, the more rigid and disciplined the system around it must be.

I call this the Reliability Paradox. It is counterintuitive, and that’s precisely why it’s so dangerous.

An LLM is, by nature, stochastic: it is probabilistic and non-deterministic, and it can give you a different answer to the same question each time you ask. That is what makes it powerful. But it also means that every other part of your system (the data pipelines, the access controls, the error handling, the monitoring, the fallback logic) must be deterministic. Predictable. Tested. Engineered with the same discipline you’d apply to a financial trading system or an aircraft control module.
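To make the Reliability Paradox concrete, here is a minimal Python sketch of a deterministic shell around a stochastic call. The `call_model` parameter stands in for whatever client you actually use, and the ticket-classification contract (`category`, `confidence`, the allowed category set) is a hypothetical example, not a prescribed schema. What matters is the shape: every model output is parsed and checked against an explicit contract, retries are bounded, and when the model keeps failing, the system falls back to a predictable path instead of passing along whatever came back.

```python
import json
from typing import Callable, Optional

# Hypothetical contract for a ticket-classification feature.
ALLOWED_CATEGORIES = {"billing", "technical", "other"}


def parse_and_validate(raw: str) -> Optional[dict]:
    """Return the parsed output if it meets the contract, otherwise None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    category = data.get("category")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        return None
    confidence = data.get("confidence")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return None
    if not 0.0 <= confidence <= 1.0:
        return None
    return data


def classify(ticket: str, call_model: Callable[[str], str], max_attempts: int = 3) -> dict:
    """Stochastic core, deterministic shell: validate, retry a bounded number of times, then fall back."""
    for _ in range(max_attempts):
        result = parse_and_validate(call_model(ticket))
        if result is not None:
            return result
    # Deterministic fallback: flag for human review instead of guessing.
    return {"category": "other", "confidence": 0.0, "needs_human_review": True}
```

The fallback is deliberately boring. A system that routes unclear cases to a human queue behaves the same way on every bad day, which is exactly the property the model itself cannot offer.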

A team of Google researchers identified this structural tension years ago in their landmark 2015 paper, “Hidden Technical Debt in Machine Learning Systems.” Their central observation still holds, and it is even more relevant in the LLM era: in a mature production system, the code that actually does learning or prediction can be as little as 5% of the total. The other 95% (data pipelines, serving infrastructure, feature engineering, configuration management, monitoring, and the glue code that ties them together) is where the real complexity lives. And it is where most of the technical debt silently accumulates.

They also identified a principle that every AI team should have tattooed on their wall: CACE — Changing Anything Changes Everything. In a machine learning system, modifying one input feature, one hyperparameter, one data source can cascade through the entire model in ways that are nearly impossible to predict or isolate. Traditional software engineering relies on encapsulation and modularity — clean boundaries between components. ML systems erode those boundaries by design.
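One practical response to CACE is to stop treating any change as small. The Python sketch below assumes a team records every behavior-affecting setting (model identifier, prompt template version, sampling temperature, retrieval depth, data snapshot) in a single configuration object; all of those names and values are hypothetical. Hashing a canonical serialization yields one version ID, so even a one-line temperature tweak registers as a new system version that must be evaluated like any other release.

```python
import hashlib
import json


def config_fingerprint(config: dict) -> str:
    """Hash every input that can change model behavior into one short version ID.

    Under CACE, any change to any of these values is treated as a new system
    version that has to be re-evaluated before release.
    """
    # sort_keys makes the serialization independent of dict insertion order.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


# Hypothetical configuration: every name and value here is illustrative.
baseline = {
    "model": "example-model-2024-06",
    "prompt_template": "support-triage-v7",
    "temperature": 0.2,
    "retrieval_top_k": 5,
    "data_snapshot": "crm-export-2024-05-31",
}

tweaked = {**baseline, "temperature": 0.3}  # a "small" change

print(config_fingerprint(baseline) == config_fingerprint(tweaked))  # prints False
```

Logging this fingerprint alongside every prediction makes reproducibility a matter of lookup rather than archaeology: when behavior shifts, you can tell which configuration produced it.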

Now multiply that complexity by the scale of a modern LLM deployment, add the unpredictability of natural language inputs, and connect it to your mission-critical business systems. Without disciplined architecture, what you have built is not an AI solution. It is a “while-loop with a credit card” — an autonomous process burning resources with no guardrails, no kill switch, and no one who fully understands what it’s doing.
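The difference between a while-loop with a credit card and an engineered agent is largely a handful of hard limits. The Python sketch below wraps a hypothetical `step` function (one model call plus any tool use, returning the new state, the cost of that step, and whether the task is done) in three deterministic guardrails: a step cap, a spending cap, and a kill switch an operator can flip. The names and default limits are illustrative assumptions, not recommendations.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


class BudgetExceeded(RuntimeError):
    """Raised when the loop hits a hard limit instead of running on indefinitely."""


@dataclass
class Guardrails:
    max_steps: int = 10          # hard cap on iterations
    max_cost_usd: float = 1.00   # hard cap on spend for this task
    kill_switch: Callable[[], bool] = lambda: False  # operator override, checked every step


def run_agent(
    step: Callable[[str], Tuple[str, float, bool]],
    task: str,
    guards: Guardrails,
) -> str:
    """Run a hypothetical agent step function until it reports done or a guardrail trips.

    `step` returns (new_state, cost_of_this_step_usd, done).
    """
    state, spent = task, 0.0
    for i in range(guards.max_steps):
        if guards.kill_switch():
            raise BudgetExceeded(f"kill switch engaged at step {i}")
        state, cost, done = step(state)
        spent += cost
        # Spend is checked after each step, so one step can overshoot the cap;
        # a stricter design would estimate the cost before making the call.
        if spent > guards.max_cost_usd:
            raise BudgetExceeded(f"cost ${spent:.2f} exceeded cap ${guards.max_cost_usd:.2f}")
        if done:
            return state
    raise BudgetExceeded(f"no result after {guards.max_steps} steps")
```

Raising an exception rather than returning a partial answer is a deliberate choice: a tripped guardrail is an operational event someone should see, not a result to hand back to the user.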

If you think that’s hyperbole, consider Knight Capital in 2012: a botched deployment reactivated dormant trading code on one of its servers, and with no automated safeguard to stop it, that single rogue algorithm cost the firm roughly $440 million in about 45 minutes. Now imagine that algorithm could also improvise.

The Legacy Paradox: The Body We Haven’t Mapped

There is an irony at the heart of the AI revolution that I find almost poetic in its absurdity.

The systems we are now rushing to label as “legacy” — the mainframes, the COBOL programs, the SQL databases, the monolithic ERPs — are the only reason we have AI at all.

Think about it. Where did the data come from? The decades of transactional records, customer histories, process logs, financial flows, supply chain events — all of it was captured, stored, and maintained by the very systems we now dismiss as outdated. Without that data, an LLM has nothing to reason about. A recommendation engine has nothing to recommend. A predictive model has nothing to predict.

We are trying to put a “brain” onto a “body” that we haven’t even fully mapped yet.

The narrative in boardrooms is that AI will replace legacy systems. This is an architectural delusion. Those systems are not just old code — they are Systems of Record. They hold the truth of the organization: who bought what, who owes whom, what the regulatory obligations are. They are the operational backbone. And they are deeply, intricately complex — not because their builders were unskilled, but because the real world is complex, and these systems absorbed decades of that complexity into their logic.

The world didn’t survive Y2K because we replaced everything with modern code. It survived because senior engineers spent years patiently mapping the deep, tangled logic of legacy systems and fixing what needed fixing. That was an architectural feat of enormous discipline.

Today, we face a harder version of the same challenge: integrating stochastic, unpredictable AI with deterministic, rigid legacy systems — without a bridge, without a map, and increasingly, without the people who built the map in the first place.

If your legacy data is siloed, undocumented, or poorly understood, your AI will not compensate for that. It will hallucinate at scale. You cannot build a “System of Intelligence” if your “System of Record” is a black box.

The Human Tragedy: Firing the Interpreters

This brings me to the part that is hardest to write, because it is not about technology. It is about people.

Across the industry, senior engineers and architects — the people who understand concurrency, memory management, distributed systems, the deep “why” behind decades of design decisions — are being laid off. The reasoning is blunt: “AI can code now.”

This is a catastrophic misunderstanding of what these people actually do.

An AI coding assistant can write a function. It cannot explain why a particular database lock was implemented in a 20-year-old monolith, or why a seemingly redundant validation step exists in a payment processing pipeline, or why a configuration flag that appears useless actually prevents a cascade failure that happened once, seven years ago, at 2 AM on a holiday weekend.

There is a principle in philosophy called Chesterton’s Fence: never tear down a fence until you understand why it was built. In software, this translates to a simple rule — never remove code, never bypass a check, never “modernize” a system until you understand the intent behind its current design. That intent lives not in documentation (which is almost always stale) but in the minds of the people who built and maintained these systems.

When you fire those people, you don’t just lose employees. You lose the systemic immune system of your organization. You lose the institutional memory that protects you from repeating catastrophic mistakes. And you lose it at the exact moment you need it most — when you’re trying to connect your most critical systems to a technology that, by its very nature, is unpredictable.

Early evidence points the same way. Surveys suggest that a significant share of organizations that cut staff in the name of AI now regret it, and some of those workers are being quietly rehired, at higher cost and with damaged trust, after irreplaceable knowledge has already walked out the door.

This isn’t just a workforce issue. It’s a strategic blind spot of historic proportions. We are firing the interpreters — the only people who can read the map of the body we’re trying to give a brain.

IBM Watson: A $4 Billion Lesson We Haven’t Learned

If you want a concrete example of what the Engineering Gap looks like at scale, look no further than IBM Watson Health.

IBM spent roughly $4 billion, much of it on acquisitions, building out Watson Health, with Watson for Oncology positioned as the future of healthcare AI. The technology was real. The ambition was genuine. And the project failed, not because the AI wasn’t smart enough, but because the engineering wasn’t disciplined enough.

Watson couldn’t reliably access live patient data from hospital EHR systems. When hospitals migrated to new platforms, their Watson integrations simply stopped working, turning multimillion-dollar investments into custom demos. Watson for Oncology was trained largely on hypothetical patient cases devised at a single institution rather than on real, diverse clinical data, an architectural decision that built bias in from the start. And perhaps most damning, the marketing consistently outpaced the engineering. What was sold as a production-ready clinical tool was, architecturally, still a research prototype.

Watson didn’t fail because AI doesn’t work. Watson failed because nobody built the bridge between what the model could do and what the real-world system needed to be. The Engineering Gap consumed $4 billion and set healthcare AI back by years.

So What Do We Actually Do?

If you’ve read this far, you might expect me to pitch a specific tool or framework. I’m not going to do that — at least not yet.

Because before any tool can help, the mindset has to change.

The shift is this: stop thinking of AI projects as AI projects. They are software projects that happen to include an AI component. And that component — powerful as it is — is the most unpredictable part of your system, which means everything else must be built with even greater discipline than before.

This means treating AI with the engineering rigor we apply to any mission-critical system: requirements engineering that translates stakeholder intent into verifiable outcomes; software architecture that serves as the blueprint for separating concerns, managing failure, and enabling change; configuration management that versions not just code but models, data, and parameters, because reproducibility is not optional; testing strategies that account for non-deterministic outputs; and operational practices that monitor for model drift, cost overruns, and degradation over time.
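Testing a non-deterministic component means asserting properties rather than exact strings, and measuring how often those properties hold across repeated samples. In the Python sketch below, the invoice-summary feature, its JSON fields, the 280-character limit, and the default sample count are all hypothetical; `generate` stands in for a call to the model with a fixed input, and `expected_total` for a value read from the system of record.

```python
import json
from typing import Callable, List


def check_invariants(output: str, expected_total: float) -> List[str]:
    """Return the properties one model output violates (an empty list means it passes).

    The properties are hypothetical examples for an invoice-summary feature.
    """
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]
    problems = []
    # The total must match the system of record exactly; the prose may vary.
    if data.get("total") != expected_total:
        problems.append("total does not match the system of record")
    summary = data.get("summary")
    if not isinstance(summary, str):
        problems.append("summary is missing or not a string")
    elif len(summary) > 280:
        problems.append("summary is longer than 280 characters")
    return problems


def pass_rate(generate: Callable[[], str], expected_total: float, samples: int = 20) -> float:
    """Call the model repeatedly on the same input and report how often every property holds."""
    passed = sum(1 for _ in range(samples) if not check_invariants(generate(), expected_total))
    return passed / samples
```

In a test suite this becomes a release gate such as `assert pass_rate(generate, 1250.0) >= 0.95`. The threshold is a risk decision each team has to justify for its own use case, not a universal constant.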

These are not new ideas. They are the accumulated wisdom of decades of software engineering — formalized in bodies of knowledge like SWEBOK, battle-tested through countless failures, and more relevant today than they have ever been.

You don’t need AI enthusiasts building your production systems. You need engineers who are skeptical of the magic and obsessed with the plumbing.

What Comes Next

This post is the first in a series. The Engineering Gap is the diagnosis. In the posts that follow, I’ll get into the architecture — specifically, one of the most critical and most overlooked layers in the modern AI stack: the AI Gateway.

If your organization is running AI workloads in production — or planning to — without a dedicated governance layer between your applications and your model providers, you are flying blind. An AI Gateway is where the principles discussed in this post become concrete: rate limiting, cost control, prompt management, fallback routing, semantic caching, audit trails, and circuit breakers.
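Of the gateway capabilities listed above, fallback routing and circuit breakers are the easiest to show in a few lines. The Python sketch below is a simplified, single-threaded illustration, not how LiteLLM or any commercial gateway implements them; the provider names, thresholds, and `call` function are hypothetical placeholders. After a provider fails a set number of times in a row, its circuit opens and traffic goes to the next provider; once a cooldown has passed, a trial request is let through to check whether it has recovered.

```python
import time
from typing import Callable, Dict, List, Optional


class CircuitBreaker:
    """Stop sending traffic to a provider after repeated failures, then retry after a cooldown."""

    def __init__(self, failure_threshold: int = 3, cooldown_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means closed: traffic allowed

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        # After the cooldown, let a trial request through (the "half-open" state).
        return self.clock() - self.opened_at >= self.cooldown_s

    def record_success(self) -> None:
        self.failures, self.opened_at = 0, None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()  # open, or re-open after a failed trial


def route(prompt: str, providers: List[str],
          call: Callable[[str, str], str],
          breakers: Dict[str, CircuitBreaker]) -> str:
    """Try providers in priority order, skipping any whose circuit is open."""
    for name in providers:
        breaker = breakers[name]
        if not breaker.allow():
            continue
        try:
            reply = call(name, prompt)
        except Exception:
            # In this sketch any exception counts as a failure; a real gateway would
            # distinguish timeouts and server errors from malformed requests.
            breaker.record_failure()
            continue
        breaker.record_success()
        return reply
    raise RuntimeError("all providers unavailable")
```

Injecting the clock keeps the breaker testable without real waiting, which is the same deterministic-shell discipline applied to the gateway itself.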

We’ll do a deep dive into what an AI Gateway actually is, why it’s different from a traditional API gateway, and how commercial AI Gateways and open-source options such as LiteLLM approach the problem differently. We’ll look at real architectural patterns, trade-offs, and decision frameworks.

Because the gap isn’t going to close itself. Someone has to build the bridge.

And it has to be an engineer.